Human brains process information in layers. The first layer is fast, automatic, and mostly unconscious. Pattern recognition fires. Associations form. Emotional reactions trigger. All of this happens in the first few seconds, long before rational evaluation kicks in.

This is why traditional feedback methods struggle to capture what actually matters. When you ask someone “What do you think of this idea?” you’re asking them to report on a process they don’t have conscious access to. They’ll tell you what sounds reasonable after the fact, not what their brain actually did in the moment of first contact.

The gap between these two things is where most ideas fail. A founder presents a pitch that seems clear to them. The audience nods politely. But somewhere in those first few seconds, their brain made a snap judgment that the founder never sees. Maybe the phrasing triggered a comparison to a competitor. Maybe the framing activated skepticism. Maybe the value proposition got lost in cognitive load. None of this surfaces in the conversation because people don’t know it happened.

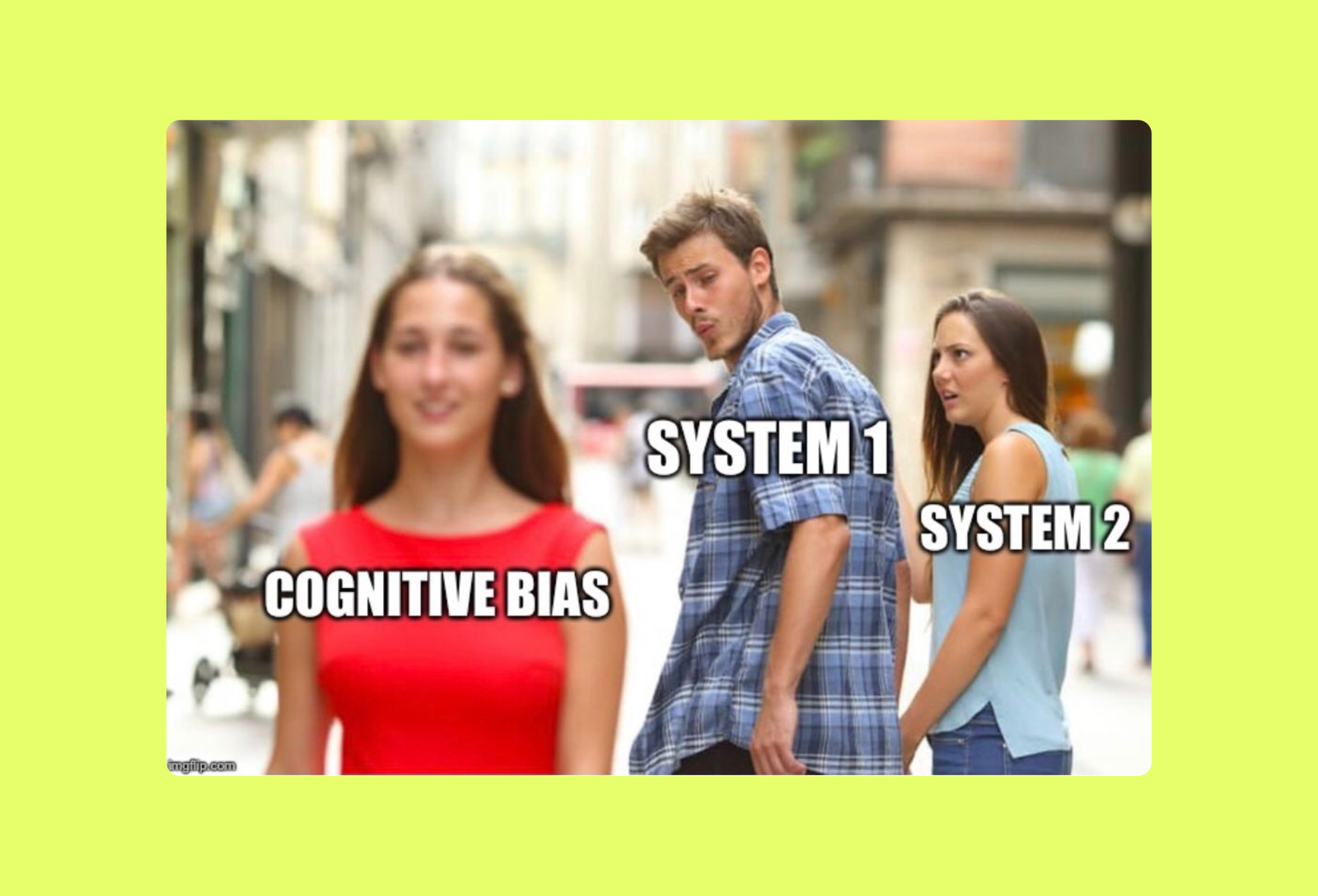

Behavioral science has documented this pattern for decades. Daniel Kahneman’s work on System 1 and System 2 thinking shows that fast, automatic judgments drive most decisions, while slow, deliberate reasoning often just justifies what the fast system already decided. Robert Cialdini’s research on persuasion demonstrates that people respond to specific psychological triggers they can’t always articulate. Biases and heuristics like anchoring, framing effects, and the availability heuristic shape how messages land, often without anyone noticing.

The problem with asking people for feedback is that you’re asking System 2 to explain what System 1 already did. And System 2 doesn’t actually know. It makes up plausible explanations that sound good but don’t reflect the real cognitive process. This is called confabulation, and it happens constantly in customer interviews, surveys, and focus groups.

Simulated feedback works differently. Instead of asking people to report on their reactions, it models the cognitive process directly. It accounts for how pattern recognition works, what triggers skepticism, where clarity breaks down, and what objections surface before conscious thought. It doesn’t rely on self-report because self-report is unreliable. It models the underlying psychology instead.

This matters because the problems that kill ideas are usually invisible in traditional feedback. Someone tells you your pitch “looks good” but doesn’t mention that they spent the first ten seconds trying to figure out how you’re different from an existing solution. Someone says they “might use this” but doesn’t realize they’ve already mentally filed it as “too complicated to be worth switching.” Someone gives you encouragement but their brain already flagged three trust concerns they couldn’t articulate.

These aren’t conscious deceptions. People genuinely don’t know these reactions happened. But they determine whether your idea succeeds or fails. The pitch that triggers the wrong comparison loses before you get to pricing. The product description that creates cognitive overload never gets evaluated on its actual merits. The positioning that activates skepticism has to work twice as hard to earn trust.

Traditional feedback captures the output of this process: thumbs up or thumbs down, vague reactions, polite interest. Behavioral modeling captures the process itself: where the mental model breaks, what associations form, which psychological patterns activate. That granularity is the difference between knowing something didn’t work and knowing exactly why.

Research on cognitive load shows that people can only hold a limited amount of information in working memory at once. When a pitch or product description exceeds that capacity, comprehension drops off sharply. But people won’t tell you “I got cognitively overwhelmed in the second paragraph.” They’ll just say “It seems complicated” or “I’m not sure I get it.” Simulated feedback can pinpoint the exact moment capacity was exceeded and what information could be cut or restructured.

Studies on loss aversion also show that people weigh potential losses more heavily than equivalent gains. This means if your pitch inadvertently activates loss framing, you’re fighting uphill even if your value proposition is strong. Traditional feedback won’t surface this because people process it unconsciously. Behavioral modeling can identify when and how loss aversion gets triggered.

The anchoring effect shows that the first piece of information people encounter disproportionately influences their subsequent judgments. If your opening line anchors people to the wrong comparison point or price expectation, everything after that gets evaluated through a distorted lens. You won’t see this in a focus group because it’s a cognitive bias, not a conscious opinion. But it absolutely affects whether your idea lands.

Social proof research shows that people look to others’ behavior to guide their own decisions, especially under uncertainty. If your messaging doesn’t provide clear signals of validation or adoption, skepticism increases even if your product is objectively strong. Traditional feedback might tell you people are “hesitant” but won’t show you that the absence of social proof cues is the specific blocker. Simulated feedback models these psychological dependencies explicitly.

The mere exposure effect demonstrates that familiarity breeds preference. This creates a structural disadvantage for new ideas because they lack the comfort of recognition. If your positioning emphasizes novelty without addressing the discomfort of unfamiliarity, you’re triggering resistance. People won’t tell you “This feels too new and therefore risky.” They’ll say “I’ll think about it” and never follow up. Behavioral modeling accounts for this psychological friction.

Real feedback is essential for validation, but it works best when the concept has already been refined. You talk to customers after you’ve removed the obvious psychological friction points, not before. You run user tests after you’ve fixed the clarity problems, not as a way to discover them. You pitch investors after you’ve addressed the unconscious objections, not hoping they’ll tell you what those objections are.

The sequence matters. Test behaviorally to catch systematic cognitive problems. Then validate with real people to confirm your solutions work. Doing it backward means you’re exposing rough drafts to audiences that form lasting first impressions. Those impressions stick even if you later fix the problems.

Psychologists have shown that first impressions form in milliseconds and are remarkably persistent. Subsequent information gets filtered through that initial judgment rather than replacing it. This is why “we’ve updated our positioning” rarely fixes a launch that landed poorly. The brain already filed you in a category, and it takes far more effort to change that classification than to get it right the first time.

Simulated feedback doesn’t replace intuition or judgment. It supplements them by showing you blind spots. You know your idea deeply, which is both an advantage and a disadvantage. The advantage is comprehensive understanding. The disadvantage is that you can’t unsee what you know, which makes it nearly impossible to experience your idea the way a newcomer would.

Cognitive scientists call this the curse of knowledge. Once you understand something, you can’t remember what it was like not to understand it. This makes you a terrible judge of whether your explanation is clear to someone encountering it fresh. Simulated feedback provides that fresh perspective systematically, not by asking you to imagine being someone else, but by modeling how someone else’s cognitive processes would actually engage with your message.

The goal isn’t artificial validation. The goal is to see your idea through the lens of realistic psychological responses before those responses happen in contexts you can’t control. To identify where your framing triggers the wrong mental model. To spot when your value proposition gets buried in complexity. To understand which objections will surface not because people are difficult, but because that’s how brains work.

Validation will always require real human engagement eventually. But that engagement is far more productive when you’re testing a refined concept rather than discovering basic problems in real time. You get better feedback because you’re asking the right questions. You iterate faster because you already fixed the obvious issues. You launch with more confidence because you’ve already pressure-tested the psychology.

The companies that validate effectively aren’t the ones that talk to the most customers. They’re the ones that show up to those conversations with concepts that have already survived cognitive scrutiny. They’ve already removed the friction points that would’ve derailed the discussion. They’ve already addressed the unconscious objections that would’ve created hesitation. They’re not hoping for validation. They’re confirming that their refinement process worked.

That’s what separates testing from guessing. Testing means exposing your idea to realistic psychological models and seeing where it breaks. Guessing means hoping your intuition is right and finding out later when the cost is higher. Both approaches eventually get you feedback. One just gets you there with far less waste.

Test your concepts against behavioral psychology before they meet real audiences. Try Persocrat.